Any future healthcare stimulus funding or HITECH Act 2.0 efforts need to focus on tools and resources that can build a bridge from health IT to public health and precision public health efforts targeted at the communities hardest hit.

Webinar – Precision Medicine and Health IT

In this webinar, Chilmark Senior Analyst and lead report author Jody Ranck presented an overview...

Precision Medicine: New Data, New Challenges, New Insights

Precision medicine (PM) is of growing importance in healthcare and was the focus of a major push...

Signaling the Tipping Point of AI Value in Healthcare

A brief recap of the World Medical Innovation Forum 2018. Top minds across a broad spectrum of...

One More Step in the Long Road of Precision Medicine

CMS decision removes important barrier for some Medicare cancer patients to access next generation...

Top 7 Things to Look for at HIMSS17

The final countdown has begun. In a few short days I and the rest of the Chilmark Research team will make our annual pilgrimage to the big health IT confab, HIMSS17, to rub shoulders with some 45,000 of our closest friends.

Bridging Genomics-Health IT Gap for Precision Medicine

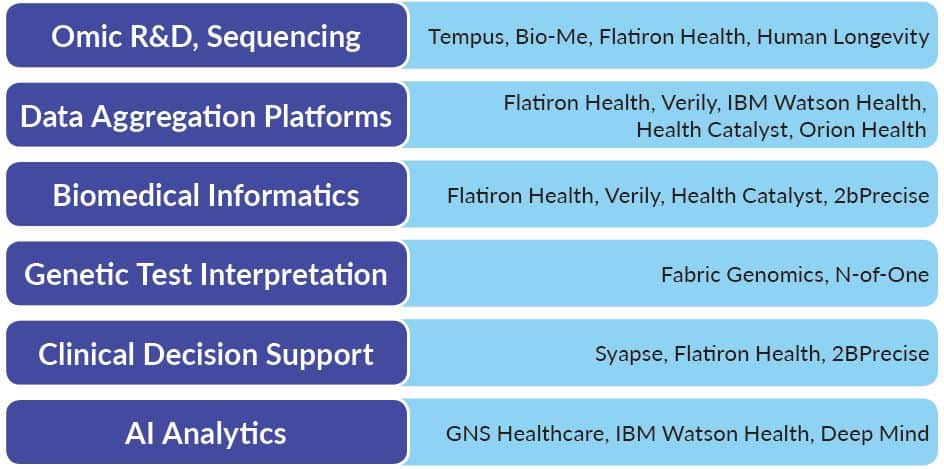

What are the real challenges to making precision medicine a reality? Given the challenges already associated with implementation and interoperability, how will healthcare accommodate an additional stakeholder whose data sets are much larger, and bring those insights into the clinic in an actionable way?